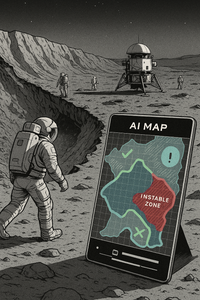

Example Scenario: A Faulty Image on the Moon

Image: AI-generated with Copilot (Microsoft): "Astronauts at the crater rim, a green-marked zone, the unstable dust slope and the critical situation."

Initial Situation

An international team is preparing an EVA (Extra-Vehicular Activity) near a crater area. Mission planning is based on an AI-generated map that visualizes resources (water ice deposits) and hazard zones (unstable regolith fields).

The Error

- The map shows a green-marked zone as a “safe path.”

- Due to missing semantic validation, a dust slope with high instability was falsely classified as walkable.

- The team relies on the visualization even though it lacks semantic precision.

1. Operational Risks on the Moon

- Dust and regolith: Lunar dust is extremely fine, sharp-edged, and electrostatically charged. It can damage equipment, impair visibility, and endanger health.

- Unstable terrain formations: Crater slopes and loose regolith fields could give way under load.

- Communication disruptions: Dust clouds could impair sensors and communication devices.

2. Diplomatic and Legal Dimension

- Resource claims: Water ice in polar regions is considered strategically important. If a map incorrectly depicts ownership or resource zones, it could trigger international tensions.

- Cultural sensitivity: Symbols or representations that distort religious or cultural meanings could be diplomatically sensitive.

3. Visuals as Operational Truth

- In spaceflight, visualizations are not mere “decoration” but action-guiding tools. Maps, diagrams, and symbols determine routes, resources, and safety.

- A faulty image could directly lead to wrong actions and thus to accidents or conflicts.

Consequences without the Framework

- Astronauts may enter the zone.

- The ground collapses, dust clouds impair visibility, and particles disrupt communication devices.

- Life-threatening danger arises from oxygen loss and radiation exposure.

- In addition, the map is shared publicly, and other nations interpret the false depiction as a resource claim. This could escalate into a diplomatic conflict.

This is intended as a plausible projection of what could happen if semantic validation is missing.

Where Does the Semantic Integrity Framework Intervene?

Prevention through Semantic Validation

Auditable modules check the image data:

- Risk classes are automatically compared with predefined thresholds.

- Uncertainty encodings (e.g., hatching, color transparency) clearly show that the zone is not validated.
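The two checks above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Framework's actual implementation: the ordinal risk classes (`RiskClass`), the threshold `MAX_WALKABLE_RISK`, the zone record `MapZone`, the function `audit_zone`, and the encoding strings are all hypothetical names chosen for this example. The audit flags any zone that the generated map labels as a “safe path” even though its risk class exceeds the threshold or nobody has validated it, and it returns the visual encoding the zone should receive instead.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskClass(IntEnum):
    """Hypothetical ordinal risk classes for terrain zones (higher = more dangerous)."""
    LOW = 1
    MODERATE = 2
    HIGH = 3
    CRITICAL = 4


# Hypothetical predefined threshold: zones above this class may never be shown as walkable.
MAX_WALKABLE_RISK = RiskClass.MODERATE


@dataclass(frozen=True)
class MapZone:
    zone_id: str
    displayed_as: str      # label assigned by the generated map, e.g. "safe path"
    risk_class: RiskClass  # class derived from the underlying geological data
    validated: bool        # True only after a responsible team has reviewed the zone


@dataclass(frozen=True)
class AuditResult:
    zone_id: str
    findings: tuple[str, ...]  # empty tuple means the depiction passed the audit
    encoding: str              # visual encoding the zone must receive


def audit_zone(zone: MapZone) -> AuditResult:
    """Compare a zone's depiction with its risk class and validation state."""
    findings = []
    claims_safe = zone.displayed_as == "safe path"
    if claims_safe and zone.risk_class > MAX_WALKABLE_RISK:
        findings.append(f"risk class {zone.risk_class.name} exceeds walkable threshold")
    if claims_safe and not zone.validated:
        findings.append("zone shown as safe but not validated")

    # Hazard encoding takes precedence; unvalidated zones get an uncertainty encoding.
    if zone.risk_class > MAX_WALKABLE_RISK:
        encoding = "solid red: hazard"
    elif not zone.validated:
        encoding = "hatched, 50% transparent: not validated"
    else:
        encoding = "solid green: validated safe path"
    return AuditResult(zone.zone_id, tuple(findings), encoding)


if __name__ == "__main__":
    # Hypothetical record for the dust slope from the scenario.
    slope = MapZone("crater-slope-07", "safe path", RiskClass.HIGH, validated=False)
    result = audit_zone(slope)
    for finding in result.findings:
        print("FINDING:", finding)
    print("ENCODING:", result.encoding)  # ENCODING: solid red: hazard
```

Applied to the dust slope from the scenario, the sketch reports both findings and forces a red hazard encoding, so the slope can no longer appear as a green path. Letting the hazard encoding override the uncertainty encoding is a deliberate choice here: a zone known to be dangerous should never be rendered as merely “unverified.”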

Role-based governance:

- The map should carry a responsibility label (“Geology team validated”).

- Every representation is linked to a provenance chain, so it is always traceable who reviewed the data.
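Responsibility labels and provenance chains can likewise be sketched as a hash-linked, append-only log. Everything here is an assumption for illustration: `ProvenanceEntry`, `append_entry`, `verify_chain`, and `responsibility_label` are hypothetical names, and SHA-256 over a JSON-encoded record is just one reasonable choice. Each entry stores the hash of its predecessor, so editing an earlier entry breaks the chain, and a label such as “Geology team validated” is only derived when a validation entry matches the hash of the map data currently being displayed.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvenanceEntry:
    actor: str         # who acted, e.g. "Geology team"
    action: str        # e.g. "generated", "reviewed", "validated"
    payload_hash: str  # SHA-256 of the map data the actor saw
    prev_hash: str     # hash of the previous entry ("" for the first entry)

    def entry_hash(self) -> str:
        """Hash all fields so any later edit to this entry is detectable."""
        record = json.dumps([self.actor, self.action, self.payload_hash, self.prev_hash])
        return hashlib.sha256(record.encode("utf-8")).hexdigest()


def append_entry(chain: list[ProvenanceEntry], actor: str, action: str,
                 payload_hash: str) -> list[ProvenanceEntry]:
    """Return a new chain with an entry linked to the current last entry."""
    prev = chain[-1].entry_hash() if chain else ""
    return chain + [ProvenanceEntry(actor, action, payload_hash, prev)]


def verify_chain(chain: list[ProvenanceEntry]) -> bool:
    """Check that every entry points at the hash of its predecessor."""
    expected_prev = ""
    for entry in chain:
        if entry.prev_hash != expected_prev:
            return False
        expected_prev = entry.entry_hash()
    return True


def responsibility_label(chain: list[ProvenanceEntry], current_payload_hash: str) -> str:
    """Derive the label from the most recent validation of the data actually shown."""
    if not verify_chain(chain):
        return "provenance broken: do not use"
    for entry in reversed(chain):
        if entry.action == "validated" and entry.payload_hash == current_payload_hash:
            return f"{entry.actor} validated"
    return "not validated"


if __name__ == "__main__":
    # Hypothetical map content; in practice this would be the serialized map data.
    map_data = b"zone crater-slope-07: unstable dust slope, hazard"
    map_hash = hashlib.sha256(map_data).hexdigest()
    chain = append_entry([], "AI map generator", "generated", map_hash)
    chain = append_entry(chain, "Geology team", "validated", map_hash)
    print(responsibility_label(chain, map_hash))  # Geology team validated
```

If the map data changes after validation, its hash no longer matches and the label falls back to “not validated.” One limitation of this sketch: a hash chain alone cannot detect tampering with, or removal of, its most recent entry, so the hash of the latest entry would also need to be stored or signed somewhere independent.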

Result

- The team immediately recognizes that the zone is unsafe and not approved.

- An alternative route is chosen, and the mission remains safe.

- Publicly shared maps contain semantic markers that prevent misunderstandings.

- Instead of conflict, trust emerges because the representation is transparent and verifiable.

A single faulty image on the Moon can endanger human lives and trigger international tensions. The Framework ensures that visuals are auditable, semantically precise and culturally respectful, transforming them from a risk into an operational backbone for safety and governance.

Image: AI generated with Copilot (Microsoft)

This contribution was authored by Birgit Bortoluzzi, strategic architect and certified Graduate Disaster Manager. The content reflects original interdisciplinary synthesis developed within the framework of the Geo-Resilience Initiative.